Apinizer Installation and Configuration

This document explains the installation of the Apinizer API Management Platform.

1) Introduction

Apinizer runs on the Kubernetes platform and consists of three components: API Manager, Gateway Server, and Cache Server.

API Manager: The API Manager is a web-based management interface for defining APIs, policies, users, credentials, and configurations. It also lets you view and analyze API traffic and analytics data.

Gateway Server: The Gateway Server is the most critical component of Apinizer. It serves as the entry point for incoming client requests and functions as the Policy Enforcement Point (PEP). It processes incoming requests in accordance with defined policies, directing them to the appropriate Backend API/Service. Moreover, it can act as a load balancer during routing, and TLS/SSL termination is conducted within this module. The Gateway Server also processes the responses from the Backend API/Service in alignment with specified policies before sending them back to the clients. All activities are logged and asynchronously transmitted to the log server. Additionally, sensitive data is recorded in compliance with predetermined rules (such as deletion, masking, or encryption). Each Gateway is associated with an Environment, and its settings are tailored to the respective operating Environment. Apinizer supports multiple Environments, and within each Environment, there may be several Gateways.

Cache Server: The Cache Server manages data shared between components by storing it in a distributed cache, which leads to performance improvements.

After deploying Apinizer images to the Kubernetes environment, you need to add the License Key provided by Apinizer to the database.

Apinizer installation proceeds with the API Manager's installation first, followed by defining the environments where Gateway and Cache Server will operate.

2) Pre-Installation Steps

Before starting the Apinizer installation, you need a Kubernetes cluster and a MongoDB replica set on your servers. Elasticsearch is optional; install it if you plan to manage API traffic and analytics data through Apinizer.

If Kubernetes, MongoDB, and Log Servers are already set up in your environment, you can skip this section.

- For Kubernetes installation, see Kubernetes Installation.

- For MongoDB installation, see MongoDB Installation.

- For Elasticsearch installation, see Elasticsearch Installation.

- For Cloud installation, see Cloud Environments Installation.

- For Offline installation, see Offline Installation.

3) Installation and Configurations

3.1) Defining Kubernetes Authorizations and Creating Namespaces

Apinizer needs permissions on the Kubernetes API in order to access the pods in the created Namespace.

In Kubernetes, ClusterRole and ClusterRoleBinding provide Kubernetes cluster-level role and role assignment mechanisms. These two resources enable cluster administrators and application developers to manage access and permissions to Kubernetes resources.

If Environment management is to be done through API Manager, authorizations must be defined so that Apinizer can access the Kubernetes APIs and create, delete, update, and monitor Namespaces, Deployments, Pods, and Services.

3.1.1) If Kubernetes management is done with Apinizer

In the following step, create Role and RoleBinding on Kubernetes and define authorizations. Authorization is given for all environments to be created in this step.

vi apinizer-role.yaml


3.1.2) If Kubernetes management is not done with Apinizer

Here only the authorizations for the manager application in the Apinizer namespace are set.

vi apinizer-manager-role.yaml


3.2) API Manager Installation

API Manager is a web-based management interface where APIs, policies, users, credentials, and configurations are defined, and API traffic and analytical data can be viewed and analyzed.

Before deploying API Manager to Kubernetes, configure the following variables according to your environment.

- APINIZER_VERSION: The Apinizer version to install. It is recommended to always use the latest version for new installations. See the release notes for the current versions.

- MONGO_DBNAME: The name of the database to be used for Apinizer configurations. It is recommended to use the default name "apinizerdb".

- MONGOX_IP and MONGOX_PORT: IP and port information for the MongoDB servers. The default port for MongoDB is 27017.

- MONGO_USERNAME and MONGO_PASSWORD: The credentials of the MongoDB user authorized for Apinizer. This user must have permissions on the relevant database or the authority to create that database.

- YOUR_LICENSE_KEY: The license key sent to you by Apinizer.

- K8S_ANY_WORKER_IP: After the Apinizer installation is complete, you need an IP address from your Kubernetes cluster to access the Apinizer API Manager from a web browser. This is typically one of the Kubernetes worker servers; it is recommended to place it behind a load balancer and DNS later.

3.2.1) Creating secret with mongodb information

It is recommended to store your MongoDB connection information base64-encoded in a Kubernetes Secret rather than in plain text in the deployments. To do this, follow the steps below in a terminal on a Linux-based operating system.

DB_URL='mongodb://<MONGO_USERNAME>:<MONGO_PASSWORD>@<MONGO1_IP>:<MONGO1_PORT>,<MONGO2_IP>:<MONGO2_PORT>,<MONGO3_IP>:<MONGO3_PORT>/?authSource=admin&replicaSet=apinizer-replicaset'
DB_NAME='<MONGO_DBNAME>'
# For the <MONGO_DBNAME> variable, the default recommendation is the name "apinizerdb"

# -w 0 disables line wrapping (GNU coreutils), so the output stays on one line
echo -n "${DB_URL}" | base64 -w 0
# The output replaces the <ENCODED_URL> variable in the next step

echo -n "${DB_NAME}" | base64 -w 0
# The output replaces the <ENCODED_DB_NAME> variable in the next step
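Before placing the encoded values in the Secret, it can help to confirm that they decode back to the original text. The sketch below is illustrative only: the helper name b64_encode is hypothetical, and it strips newlines with tr so that it does not rely on any wrapping option of a particular base64 implementation.

```shell
#!/usr/bin/env bash
# Hypothetical helper: base64-encode a string onto a single line.
b64_encode() {
  # printf avoids the trailing newline that a plain echo would add
  printf '%s' "$1" | base64 | tr -d '\n'
}

# Example with the default database name recommended in this guide.
encoded_name="$(b64_encode 'apinizerdb')"
echo "${encoded_name}"

# Decode again to confirm the value survives the round trip.
printf '%s' "${encoded_name}" | base64 --decode
echo
```

The same check can be run on the connection URL; a wrapped or newline-terminated value is a common reason for a Secret that decodes to something unexpected.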

vi secret.yaml

Usage of the encoded database information in YAML:

apiVersion: v1
kind: Secret
metadata:
  name: mongo-db-credentials
  namespace: apinizer
type: Opaque
data:
  dbUrl: <ENCODED_URL>
  dbName: <ENCODED_DB_NAME>

kubectl apply -f secret.yaml

JAVA_OPTS: The Java memory settings that API Manager will use in the operating system.

3.2.2) Kubernetes deployment of API Manager

Modify the sample yaml file below to suit your systems and upload it to your Kubernetes Cluster.

vi apinizer-apimanager-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: apimanager
  namespace: apinizer
spec:
  replicas: 1
  selector:
    matchLabels:
      app: apimanager
      version: v1
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: apimanager
        version: v1
    spec:
      containers:
        - env:
            - name: JAVA_OPTS
              value: '-XX:MaxRAMPercentage=75.0 -Dlog4j.formatMsgNoLookups=true'
            - name: SPRING_PROFILES_ACTIVE
              value: prod
            - name: SPRING_SERVLET_MULTIPART_MAX_FILE_SIZE
              value: "70MB"
            - name: SPRING_SERVLET_MULTIPART_MAX_REQUEST_SIZE
              value: "70MB"
            - name: WORKER_DEPLOYMENT_TIMEOUT
              value: '120'
            - name: SPRING_DATA_MONGODB_URI
              valueFrom:
                secretKeyRef:
                  key: dbUrl
                  name: mongo-db-credentials
            - name: SPRING_DATA_MONGODB_DATABASE
              valueFrom:
                secretKeyRef:
                  key: dbName
                  name: mongo-db-credentials
          name: apimanager
          image: apinizercloud/apimanager:<APINIZER_VERSION>
          imagePullPolicy: IfNotPresent
          ports:
            - containerPort: 8080
              protocol: TCP
          resources:
            limits:
              cpu: 1
              memory: 3Gi
          startupProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8080
              scheme: HTTP
            initialDelaySeconds: 60
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
          readinessProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8080
              scheme: HTTP
            initialDelaySeconds: 60
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
          livenessProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8080
              scheme: HTTP
            initialDelaySeconds: 60
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
      dnsPolicy: ClusterFirst
      restartPolicy: Always
      hostAliases:
        - ip: "<IP_ADDRESS>"
          hostnames:
            - "<DNS_ADDRESS_1>"
            - "<DNS_ADDRESS_2>"

If the environments will not be managed through Apinizer, the manager deployment is changed as described below.

For the Deployment's pods to use the required ServiceAccount, the serviceAccountName field is added to the pod template's spec (spec.template.spec) as follows:

spec:
  serviceAccountName: manager-serviceaccount

Environment Variables

Apinizer API Manager is built on Spring Boot. In Spring Boot, configuration properties are usually supplied as environment variables written in capital letters with underscores (_) as separators. For example, to set spring.servlet.multipart.max-file-size and spring.servlet.multipart.max-request-size as environment variables, you write them with underscores.

For example, you can define SPRING_SERVLET_MULTIPART_MAX_FILE_SIZE and SPRING_SERVLET_MULTIPART_MAX_REQUEST_SIZE as environment variables:

env:
  - name: SPRING_SERVLET_MULTIPART_MAX_FILE_SIZE
    value: "70MB"
  - name: SPRING_SERVLET_MULTIPART_MAX_REQUEST_SIZE
    value: "70MB"
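The naming convention described above can be sketched as a small shell helper. This is only an illustration of the convention used in this guide (dots and dashes become underscores, letters become uppercase); the function name to_env_name is hypothetical and not part of Apinizer or Spring Boot.

```shell
#!/usr/bin/env bash
# Hypothetical helper: derive an environment variable name from a
# Spring Boot property key, following the convention used in this guide.
to_env_name() {
  # First replace '.' and '-' with '_', then uppercase all letters
  printf '%s' "$1" | tr '.-' '__' | tr '[:lower:]' '[:upper:]'
}

to_env_name 'spring.servlet.multipart.max-file-size'; echo
to_env_name 'spring.servlet.multipart.max-request-size'; echo
```

The two printed names match the env entries shown in the deployment file.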

If you are using a proxy server such as NGINX and want to increase the file upload limit, you need to add the following setting to the NGINX configuration file:

http {
    ...
    client_max_body_size 70M; # 70MB limit
    ...
}

Environment Variables

Deployment operations are performed synchronously to ensure data integrity. The WORKER_DEPLOYMENT_TIMEOUT parameter sets the number of seconds after which a deployment operation performed via API Manager or the Management API times out.

env:
  - name: WORKER_DEPLOYMENT_TIMEOUT
    value: '120'

Create Kubernetes Service for API Manager:

vi apinizer-apimanager-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: apimanager
  namespace: apinizer
  labels:
    app: apimanager
spec:
  selector:
    app: apimanager
  type: NodePort
  ports:
    - name: http
      port: 8080
      nodePort: 32080

kubectl apply -f apinizer-apimanager-deployment.yaml
kubectl apply -f apinizer-apimanager-service.yaml

Deploying API Manager this way creates a Kubernetes Service named "apimanager" of type NodePort. This Service is required for accessing API Manager from outside the Kubernetes cluster. You can adapt it to your organization's needs, either by removing it and using Ingress or by adjusting it to the connection method used in your environment.

After this process, run the first command below to get the name of the created pod, then use that name in the second command to follow and inspect its logs.

kubectl get pods -n apinizer
kubectl logs <PODNAME> -n apinizer

After deploying Apinizer images to the Kubernetes environment, you need to add the license key provided by Apinizer to the database.

3.2.3) Entering the API Manager license key

Place the license key provided by Apinizer in a .js file as shown below; running this file updates the license information in the database.

vi license.jsdb.general_settings.updateOne(

{"_class":"GeneralSettings"},

{ $set: { licenseKey: '<YOUR_LICENSE_KEY>'}}

)The created license.js is executed on the MongoDB server. It is expected to see a result as Matched = 1.

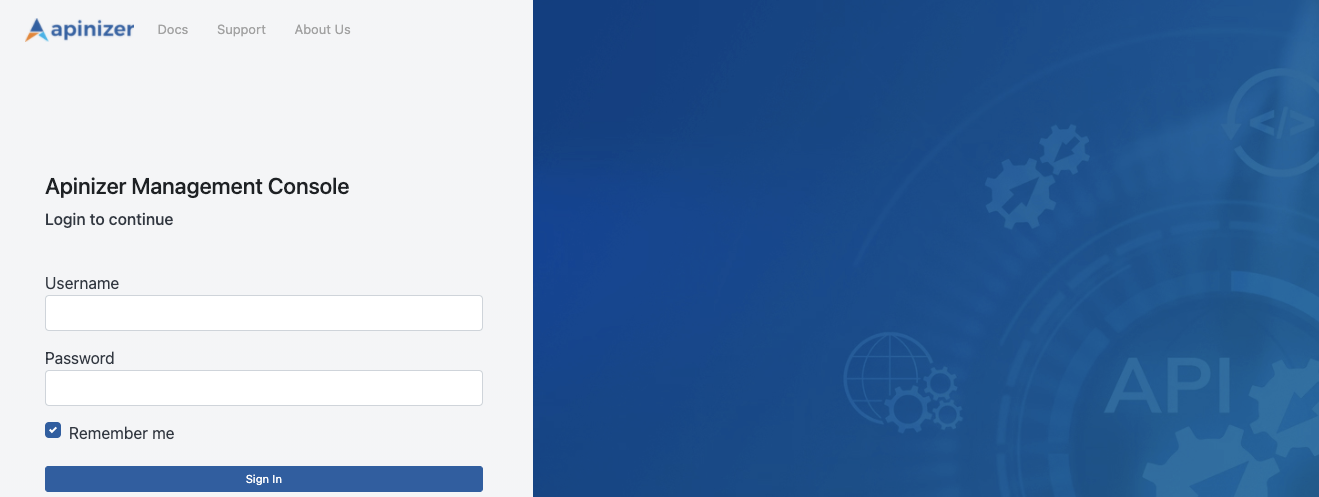

mongosh mongodb://<MONGODB_IP>:<MONGO_PORT>/<MONGO_DBNAME> --authenticationDatabase "admin" -u "apinizer" -p '<MONGO_PASSWORD>' < license.jsIf the installation process is successful, you can access the Apinizer API Manager (API Manager) at the following address.

http://<K8S_ANY_WORKER_IP>:32080Default Username: admin

Default User Password: Ask for assistance from the Apinizer support team.

It is recommended to change your password after your initial login to the Apinizer API Manager.
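The license.js file from section 3.2.3 can also be generated from a variable instead of being edited by hand. The following is a minimal sketch under stated assumptions: the function name render_license_js and the LICENSE_KEY variable are hypothetical, and the key is assumed not to contain single quotes.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the license.js content for a given key.
render_license_js() {
  local key="$1"
  # \$set is escaped so the shell does not expand it inside the heredoc
  cat <<EOF
db.general_settings.updateOne(
  {"_class":"GeneralSettings"},
  { \$set: { licenseKey: '${key}' } }
)
EOF
}

# LICENSE_KEY is an assumed variable name; the placeholder is used if unset.
LICENSE_KEY="${LICENSE_KEY:-<YOUR_LICENSE_KEY>}"
render_license_js "${LICENSE_KEY}"
```

Redirecting the output to license.js gives the same file as the manual step, which is then passed to mongosh as shown in section 3.2.3.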

3.2.4) Launching Apinizer Manager with SSL

To do this, follow the guide Initializing API Manager with SSL.

3.3) Settings to be Made on the Connection Management Page

The information about where the traffic logs flowing through Apinizer will be sent needs to be defined in Apinizer. This definition is made through Connectors on the Connection Configurations page. If you don't have a specific preference, you can use an Elasticsearch connector set up from Apinizer for data management to fully benefit from Apinizer's Analytics and Monitoring capabilities.

For these processes, you can define the connections for the applications you will use on the Connection Management pages under the System Settings → Connection Management tab.

If you will manage your API Traffic and API Analytics data with your own log systems, you can define the suitable integration settings from the options provided.

3.4) Settings to be Made on the General Settings Page

Go to the System Settings → General Settings page. The following can be configured here:

- Whether a value will be appended to the addresses defined for the relevant Worker environments when providing services through the system,

- Whether you will manage the Kubernetes environment where Apinizer is located through Apinizer or not,

- Whether logs of error messages will be sent to connected connectors even if log settings are turned off,

- Settings related to login and session durations in the API Manager interface,

- The number of rollback points to be maintained for each proxy,

- Applications where application logs and token logs will be stored/sent.

Make the definitions appropriate for your organization here.

For detailed information about this page, click here.

3.5) Settings to be Made on the Gateway Environment Page

On the System Settings → Gateways page, at least one environment should be created and published.

Give the environment a suitable name and enter the container settings with resources appropriate for your license and server count. This environment name will also be the Kubernetes namespace where the applications in that environment run. Then enable logging for the environment by defining the connectors to which you want logs to be written.

3.5.1) If Kubernetes management is done with Apinizer

For detailed information about the Gateway Environments page, click here.

On the page that opens with the new environment option, set the general settings, such as the namespace in which to create the environment, the address from which it is served, the connectors to attach, and the resources and JVM parameters of the worker and cache applications. Then publish the environment.

3.5.2) If Kubernetes management is not done with Apinizer

For detailed information about Manual Management of Gateway Environments, click here.

First, the namespace where Worker and Cache will run is created and the necessary authorizations are set in that namespace. Then two deployments, worker and cache, are created, along with the Kubernetes Services needed to access the pods that these deployments create.

3.5.2.1) Create roles and rolebindings for Worker and Cache

Determine the names of the environments in advance, set the <WORKER_CACHE_NAMESPACE> variable accordingly, and apply the following steps for each environment to be created.
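Because the same files are applied once per environment, the placeholder substitution can be scripted. The sketch below is an assumption-laden illustration: the function name render_namespace and the environment names prod and test are hypothetical, and the template is a shortened stand-in for the real manifest files.

```shell
#!/usr/bin/env bash
# Hypothetical helper: replace the <WORKER_CACHE_NAMESPACE> placeholder
# in a manifest read from stdin with a concrete namespace name.
render_namespace() {
  local ns="$1"
  sed "s/<WORKER_CACHE_NAMESPACE>/${ns}/g"
}

# Shortened example template; the real files are shown in this section.
template='metadata:
  name: worker-cache-role
  namespace: <WORKER_CACHE_NAMESPACE>'

# Render the template once per assumed environment name.
for ns in prod test; do
  printf '%s\n' "${template}" | render_namespace "${ns}"
  echo '---'
done
```

With the real manifest files, the rendered output can be piped to kubectl apply -f - instead of being printed.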

vi apinizer-worker-cache-role-ns.yaml

apiVersion: v1
kind: Namespace
metadata:
  name: <WORKER_CACHE_NAMESPACE>
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: worker-cache-role
  namespace: <WORKER_CACHE_NAMESPACE>
rules:
  - apiGroups:
      - ''
    resources:
      - services
      - namespaces
      - pods
      - endpoints
      - pods/log
      - secrets
    verbs:
      - get
      - list
      - watch
      - update
      - create
      - patch
      - delete
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: manager-serviceaccount-worker-cache-role-binding
  namespace: <WORKER_CACHE_NAMESPACE>
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: worker-cache-role
subjects:
  - kind: ServiceAccount
    name: manager-serviceaccount
    namespace: <WORKER_CACHE_NAMESPACE>

vi apinizer-worker-cache-rolebinding.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: worker-cache-serviceaccount
  namespace: <WORKER_CACHE_NAMESPACE>
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: worker-cache-serviceaccount-apinizer-role-binding
  namespace: apinizer
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: apinizer-role
subjects:
  - kind: ServiceAccount
    name: worker-cache-serviceaccount
    namespace: <WORKER_CACHE_NAMESPACE>
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: worker-cache-serviceaccount-worker-cache-role-binding
  namespace: <WORKER_CACHE_NAMESPACE>
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: worker-cache-role
subjects:
  - kind: ServiceAccount
    name: worker-cache-serviceaccount
    namespace: <WORKER_CACHE_NAMESPACE>

kubectl apply -f apinizer-worker-cache-role-ns.yaml
kubectl apply -f apinizer-worker-cache-rolebinding.yaml

3.5.2.2) Create Worker and Cache deployments

vi apinizer-worker-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: worker
  namespace: <WORKER_CACHE_NAMESPACE>
spec:
  replicas: 1
  selector:
    matchLabels:
      app: worker
  strategy:
    type: "RollingUpdate"
    rollingUpdate:
      maxUnavailable: 75%
      maxSurge: 1
  template:
    metadata:
      labels:
        app: worker
    spec:
      serviceAccountName: worker-cache-serviceaccount
      containers:
        - name: worker
          image: apinizercloud/worker:<APINIZER_VERSION>
          imagePullPolicy: IfNotPresent
          env:
            - name: JAVA_OPTS
              value: -server -XX:MaxRAMPercentage=75.0 -Dhttp.maxConnections=4096 -Dlog4j.formatMsgNoLookups=true
            - name: tuneWorkerThreads
              value: "1024"
            - name: tuneWorkerMaxThreads
              value: "4096"
            - name: tuneBufferSize
              value: "16384"
            - name: tuneIoThreads
              value: "4"
            - name: tuneBacklog
              value: "10000"
            - name: tuneRoutingConnectionPoolMaxConnectionPerHost
              value: "1024"
            - name: tuneRoutingConnectionPoolMaxConnectionTotal
              value: "4096"
            - name: SPRING_DATA_MONGODB_DATABASE
              value: null
              valueFrom:
                secretKeyRef:
                  name: mongo-db-credentials
                  key: dbName
            - name: SPRING_DATA_MONGODB_URI
              value: null
              valueFrom:
                secretKeyRef:
                  name: mongo-db-credentials
                  key: dbUrl
            - name: SPRING_PROFILES_ACTIVE
              value: prod
          lifecycle:
            preStop:
              exec:
                command:
                  - /bin/sh
                  - -c
                  - sleep 10
          livenessProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8091
              scheme: HTTP
            initialDelaySeconds: 60
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
          ports:
            - containerPort: 8091
              protocol: TCP
          readinessProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8091
              scheme: HTTP
            initialDelaySeconds: 60
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
          resources:
            limits:
              cpu: 4
              memory: 4Gi
          startupProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8091
              scheme: HTTP
            initialDelaySeconds: 60
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
      restartPolicy: Always
      hostAliases:
        - ip: "<IP_ADDRESS>"
          hostnames:
            - "<DNS_ADDRESS_1>"
            - "<DNS_ADDRESS_2>"

http2Enabled

If the Gateway type is HTTP+Websocket, it is recommended to set the http2Enabled parameter to false.

- name: http2Enabled
  value: "false"

If you want to expose the Worker application over HTTPS, set the port value to 8443 and the scheme value to HTTPS under livenessProbe, readinessProbe, and startupProbe in the YAML.

The spec.selector.matchLabels.app and spec.template.metadata.labels.app labels within the Deployment ensure that Apinizer can correctly identify and manage the worker pods. Modifying these labels may prevent the correct selection of pods and disrupt the operation of the system. Therefore, the values of these labels should not be changed.

vi apinizer-cache-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: cache
  namespace: <WORKER_CACHE_NAMESPACE>
spec:
  replicas: 1
  selector:
    matchLabels:
      app: cache
  strategy:
    type: "RollingUpdate"
    rollingUpdate:
      maxUnavailable: 75%
      maxSurge: 1
  template:
    metadata:
      labels:
        app: cache
    spec:
      serviceAccountName: worker-cache-serviceaccount
      containers:
        - name: cache
          image: apinizercloud/cache:<APINIZER_VERSION>
          imagePullPolicy: IfNotPresent
          env:
            - name: JAVA_OPTS
              value: -server -XX:MaxRAMPercentage=75.0 -Dhttp.maxConnections=1024 -Dlog4j.formatMsgNoLookups=true
            - name: SPRING_PROFILES_ACTIVE
              value: prod
            - name: SPRING_DATA_MONGODB_DATABASE
              value: null
              valueFrom:
                secretKeyRef:
                  name: mongo-db-credentials
                  key: dbName
            - name: SPRING_DATA_MONGODB_URI
              value: null
              valueFrom:
                secretKeyRef:
                  name: mongo-db-credentials
                  key: dbUrl
            - name: CACHE_SERVICE_NAME
              value: cache-hz-service
            - name: CACHE_QUOTA_TIMEZONE
              value: "+03:00"
            - name: SERVER_TOMCAT_MAX_THREADS
              value: "1024"
            - name: SERVER_TOMCAT_MIN_SPARE_THREADS
              value: "512"
            - name: SERVER_TOMCAT_ACCEPT_COUNT
              value: "512"
            - name: SERVER_TOMCAT_MAX_CONNECTIONS
              value: "1024"
            - name: SERVER_TOMCAT_CONNECTION_TIMEOUT
              value: "20000"
            - name: SERVER_TOMCAT_KEEPALIVE_TIMEOUT
              value: "60000"
            - name: SERVER_TOMCAT_MAX_KEEPALIVE_REQUESTS
              value: "10000"
            - name: SERVER_TOMCAT_PROCESSOR_CACHE
              value: "512"
            - name: HAZELCAST_IO_WRITE_THROUGH
              value: "false"
            - name: HAZELCAST_MAP_LOAD_CHUNK_SIZE
              value: "10000"
            - name: HAZELCAST_MAP_LOAD_BATCH_SIZE
              value: "10000"
            - name: HAZELCAST_CLIENT_SMART
              value: "true"
            - name: HAZELCAST_MAPCONFIG_BACKUPCOUNT
              value: "1"
            - name: HAZELCAST_MAPCONFIG_READBACKUPDATA
              value: "false"
            - name: HAZELCAST_MAPCONFIG_ASYNCBACKUPCOUNT
              value: "0"
            - name: HAZELCAST_OPERATION_RESPONSEQUEUE_IDLESTRATEGY
              value: "block"
            - name: HAZELCAST_MAP_WRITE_DELAY_SECONDS
              value: "5"
            - name: HAZELCAST_MAP_WRITE_BATCH_SIZE
              value: "100"
            - name: HAZELCAST_MAP_WRITE_COALESCING
              value: "true"
            - name: HAZELCAST_MAP_WRITE_BEHIND_QUEUE_CAPACITY
              value: "100000"
          ports:
            - containerPort: 8090
            - containerPort: 5701
          resources:
            limits:
              cpu: 1
              memory: 1024Mi
          lifecycle:
            preStop:
              exec:
                command:
                  - /bin/sh
                  - -c
                  - sleep 10
          livenessProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8090
              scheme: HTTP
            initialDelaySeconds: 120
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
          readinessProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8090
              scheme: HTTP
            initialDelaySeconds: 120
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
          startupProbe:
            failureThreshold: 3
            httpGet:
              path: /apinizer/management/health
              port: 8090
              scheme: HTTP
            initialDelaySeconds: 120
            periodSeconds: 30
            successThreshold: 1
            timeoutSeconds: 30
      restartPolicy: Always
      hostAliases:
        - ip: "<IP_ADDRESS>"
          hostnames:
            - "<DNS_ADDRESS_1>"
            - "<DNS_ADDRESS_2>"

kubectl apply -f apinizer-worker-deployment.yaml
kubectl apply -f apinizer-cache-deployment.yaml

Environment Variables

These environment variables are added to the YAML file to configure Tomcat's thread and connection management and Hazelcast's data loading, backup, and write-behind behaviors.

env:
  # Tomcat Settings
  - name: SERVER_TOMCAT_MAX_THREADS
    value: "1024" # Maximum number of concurrent threads that Tomcat can handle
  - name: SERVER_TOMCAT_MIN_SPARE_THREADS
    value: "512" # Minimum number of idle threads that Tomcat keeps ready
  - name: SERVER_TOMCAT_ACCEPT_COUNT
    value: "512" # Maximum number of incoming connections that can be queued when all threads are busy
  - name: SERVER_TOMCAT_MAX_CONNECTIONS
    value: "2048" # Maximum number of simultaneous connections that Tomcat can accept
  - name: SERVER_TOMCAT_CONNECTION_TIMEOUT
    value: "20000" # Connection timeout duration in milliseconds
  - name: SERVER_TOMCAT_KEEPALIVE_TIMEOUT
    value: "60000" # Keep-alive connection timeout duration in milliseconds
  - name: SERVER_TOMCAT_MAX_KEEPALIVE_REQUESTS
    value: "10000" # Maximum number of requests that can be processed per keep-alive connection
  - name: SERVER_TOMCAT_PROCESSOR_CACHE
    value: "512" # Maximum number of processors to cache in the processor cache
  # Hazelcast Settings
  - name: HAZELCAST_IO_WRITE_THROUGH
    value: "false" # Indicates whether Hazelcast write-through mode is enabled
  - name: HAZELCAST_MAP_LOAD_CHUNK_SIZE
    value: "10000" # Chunk size to use when loading maps
  - name: HAZELCAST_MAP_LOAD_BATCH_SIZE
    value: "10000" # Batch size to use when loading maps
  - name: HAZELCAST_CLIENT_SMART
    value: "true" # Whether the Hazelcast client uses smart routing
  - name: HAZELCAST_MAPCONFIG_BACKUPCOUNT
    value: "1" # Number of backup copies for map data
  - name: HAZELCAST_MAPCONFIG_READBACKUPDATA
    value: "false" # Whether data can be read from backup copies
  - name: HAZELCAST_MAPCONFIG_ASYNCBACKUPCOUNT
    value: "0" # Number of asynchronous backup copies
  - name: HAZELCAST_OPERATION_RESPONSEQUEUE_IDLESTRATEGY
    value: "block" # Idle strategy for the operation response queue (e.g., block, busyspin, backoff)
  - name: HAZELCAST_MAP_WRITE_DELAY_SECONDS
    value: "5" # Delay in seconds before write-behind is triggered
  - name: HAZELCAST_MAP_WRITE_BATCH_SIZE
    value: "100" # Batch size for write-behind operations
  - name: HAZELCAST_MAP_WRITE_COALESCING
    value: "true" # Whether coalescing is enabled for write-behind operations
  - name: HAZELCAST_MAP_WRITE_BEHIND_QUEUE_CAPACITY
    value: "100000" # Maximum capacity of the write-behind queue

HAZELCAST IDLESTRATEGY

If you set the HAZELCAST_OPERATION_RESPONSEQUEUE_IDLESTRATEGY parameter to "backoff", the pod will continuously use 90-100% of its CPU limit. This may provide a 5-10% performance increase, but at the cost of the Cache pod's CPU resources.

3.5.2.3) Create services for Worker and Cache

vi apinizer-worker-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: worker-management-api-http-service
  namespace: <WORKER_CACHE_NAMESPACE>
spec:
  ports:
    - port: 8091
      protocol: TCP
      targetPort: 8091
  selector:
    app: worker
  type: ClusterIP
# If the communication protocol type of your gateway is HTTP or websocket
---
apiVersion: v1
kind: Service
metadata:
  name: worker-http-service
  namespace: <WORKER_CACHE_NAMESPACE>
spec:
  ports:
    - nodePort: 30080
      port: 8091
      protocol: TCP
      targetPort: 8091
  selector:
    app: worker
  type: NodePort
# If the communication protocol type of your gateway is gRPC
---
apiVersion: v1
kind: Service
metadata:
  name: worker-grpc-service
  namespace: <WORKER_CACHE_NAMESPACE>
spec:
  ports:
    - nodePort: 30152
      port: 8094
      protocol: TCP
      targetPort: 8094
  selector:
    app: worker
  type: NodePort

If you want to serve the Worker over HTTPS, the port and targetPort values should be set to 8443 in the YAML above.

vi apinizer-cache-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: cache-http-service
  namespace: <WORKER_CACHE_NAMESPACE>
spec:
  ports:
    - port: 8090
      protocol: TCP
      targetPort: 8090
  selector:
    app: cache
  type: ClusterIP
---
apiVersion: v1
kind: Service
metadata:
  name: cache-hz-service
  namespace: <WORKER_CACHE_NAMESPACE>
spec:
  ports:
    - port: 5701
      protocol: TCP
      targetPort: 5701
  selector:
    app: cache
  type: ClusterIP

3.5.2.4) Copying the MongoDB secret from the Apinizer namespace to newly created namespaces

The Gateway and Cache Server applications also connect to MongoDB. Therefore, the secret created for the Manager application is copied to the other namespaces. The following example copies the secret from the "apinizer" namespace to the target namespace.

kubectl get secret mongo-db-credentials -n apinizer -o yaml | sed 's/namespace: apinizer/namespace: <WORKER_CACHE_NAMESPACE>/' | kubectl create -f -

Apply the generated .yaml files to the Kubernetes environment.

kubectl apply -f apinizer-worker-service.yaml
kubectl apply -f apinizer-cache-service.yaml

After installing the Worker and Cache applications in your Kubernetes environment, go to Server Management in the Apinizer API Manager and add the Kubernetes Namespace you created as an Environment in Apinizer. The Environment name here must match the name of your Namespace in Kubernetes.
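The namespace rewrite performed by the sed step of the secret-copy command in 3.5.2.4 can be tried offline on sample text before it is run against the cluster. The sketch below is illustrative: the function name retarget_namespace, the target namespace prod, and the shortened sample manifest are assumptions, not output taken from a real cluster.

```shell
#!/usr/bin/env bash
# Hypothetical helper mirroring the sed step of the copy command:
# rewrite "namespace: <source>" to "namespace: <target>" on stdin.
retarget_namespace() {
  local src="$1" dst="$2"
  sed "s/namespace: ${src}/namespace: ${dst}/"
}

# Shortened stand-in for the YAML that kubectl would print for the Secret.
sample='apiVersion: v1
kind: Secret
metadata:
  name: mongo-db-credentials
  namespace: apinizer
type: Opaque'

# Only the namespace line changes; all other lines pass through untouched.
printf '%s\n' "${sample}" | retarget_namespace apinizer prod
```

Checking the rewritten text first makes it easier to spot a mistyped namespace before the Secret is created in the wrong place.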

3.5.3) Adding a Log Connector to the created environments

At least one Log Connector should be connected to the created environments.

For detailed information about adding Log Connectors to environments, click here.

After completing the above steps, go back to the Server Management section in API Manager and update the Environment you defined as published.

3.6) Settings to be Made on the Backup Management/Configuration Page

If you have configured it on the General Settings page, the Apinizer configuration data and the database where log and token records are stored can be backed up by extracting a dump file on the server(s) specified in that setting.

In any case, it is recommended that your organization's system team copy this file to a secure server.

For detailed information about this page, click here.

3.7) Other Settings

At your first login, please change the password of the "admin" user account that you used to log in to the Apinizer API Manager. You can do this from the Change Password page under the quick menu in the top right. Store the new password securely. For detailed information about the Users page where user management is done, click here.

Many feature logs you will use in Apinizer are written to the database where configuration data is kept. If your organization's policies do not require some of these logs, click here for detailed information about what these data are and how to keep their growth under control.

If you are using Elasticsearch in the Apinizer management for Apinizer traffic logs and prefer to take snapshots at certain intervals for backup, click here for detailed information on performing these operations.

During the Apinizer installation, it is strongly recommended to open the ports on which the Worker environments are exposed and to set up DNS forwarding to the servers they run on. For this, your organization's staff should be informed of the servers and ports from which Apinizer is served and of which DNS names should point to which addresses.

If your organization is part of the "kamunet" and Apinizer will access the "kamunet" directly, outbound traffic from the Apinizer servers must leave with your "kamunet" IP address. This is done with NAT, which should be configured by your organization's firewall administrators.

If your organization wants to use the KPS (Identity Sharing System) services offered by the General Directorate of Population and Citizenship Affairs through Apinizer, the KPS information specific to your organization must be entered into the API Manager from the KPS Settings page.

Congratulations! If you've made it this far, it means the Apinizer installation and settings are complete.