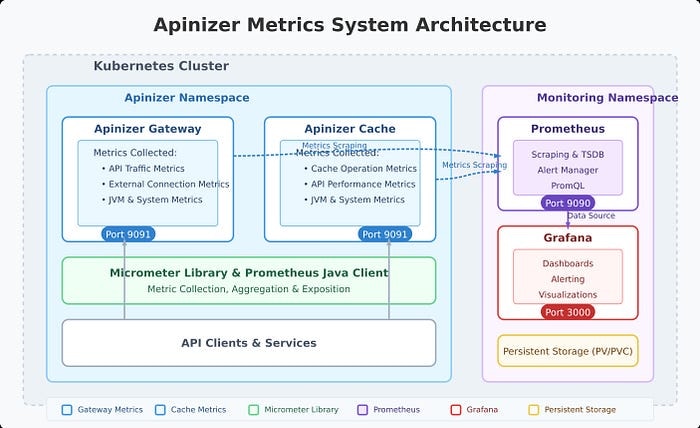

Overview of Apinizer’s Metric System

- Apinizer Gateway: Collects metrics related to API traffic, external connections, JVM health, and system resources

- Apinizer Cache: Tracks cache operations, API requests, JVM performance, and system health

Zabbix reads these metrics by querying the Prometheus /api/v1/query REST API through the HTTP Agent item type. This way, your existing Prometheus infrastructure becomes a Zabbix data source without an additional Zabbix-Prometheus plugin.

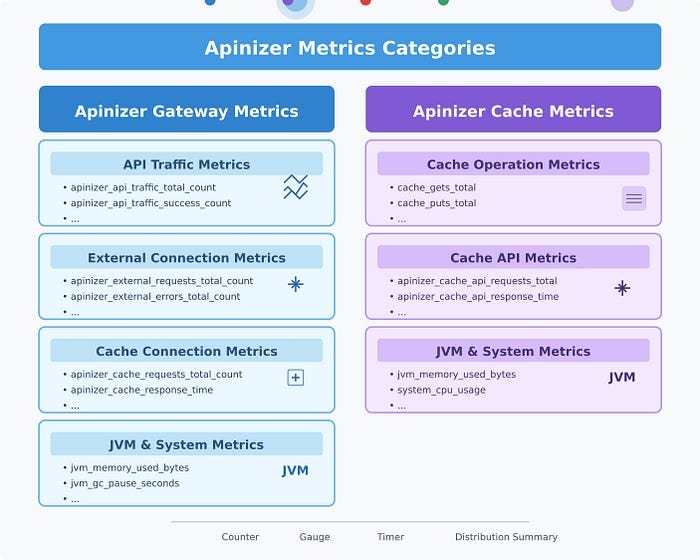

Metrics Collected by Apinizer

Apinizer Gateway Metrics

The Gateway component collects metrics across several categories:

API Traffic Metrics

These metrics track requests passing through the Apinizer Gateway:

- Total API traffic requests

- Successful/failed/blocked API requests

- Request processing times (pipeline, routing, total)

- Request and response sizes

- Cache hit statistics

- Aggregate metrics (e.g., total API requests across all APIs)

- Tagged metrics with detailed dimensions (e.g., requests per API ID, per API name)

External Connection Metrics

These track connections made to external services:

- Total external requests

- External error counts

- External response times

JVM Metrics

These provide insights into the Java Virtual Machine:

- Memory usage (heap, non-heap)

- Garbage collection statistics

- Thread counts and states

System Metrics

These monitor the underlying system:

- CPU usage

- Processor count

- System load average

- File descriptor counts

Apinizer Cache Metrics

The Cache component collects:

Cache Operation Metrics

- Cache get/put counts

- Cache size and entry counts

- Cache operation latencies

- Memory usage by cache entries

API Metrics

- API request counts

- API response times

- API error counts

JVM and System Metrics

Similar to the Gateway, the Cache component also tracks JVM performance and system resource usage.

Setting Up the Zabbix Integration

1. Enabling Metrics on Apinizer Components

For Apinizer Gateway:

Edit the worker deployment and add the METRICS_ENABLED=true environment variable. The container spec must also expose port 9091.

For Apinizer Cache:

Edit the cache deployment and add the METRICS_ENABLED=true environment variable.

Through the Kubernetes CLI:
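One way to express this change is as a strategic merge patch for the deployment. The sketch below only builds the patch body. The container name passed in is hypothetical and must match the container name in your own deployment. The port is added only when requested, since the text above asks for port 9091 on the worker only.

```python
import json


def build_metrics_patch(container_name: str, expose_port: bool) -> dict:
    """Build a strategic merge patch that enables metrics on one container.

    container_name is an assumption: it must match the real container
    name in the target deployment.
    """
    container = {
        "name": container_name,
        "env": [{"name": "METRICS_ENABLED", "value": "true"}],
    }
    if expose_port:
        # The worker container additionally needs port 9091.
        container["ports"] = [{"containerPort": 9091}]
    return {"spec": {"template": {"spec": {"containers": [container]}}}}


if __name__ == "__main__":
    # "worker" is a placeholder container name.
    print(json.dumps(build_metrics_patch("worker", expose_port=True), indent=2))
```

The printed JSON can be passed to kubectl patch deployment with the --patch option, targeting the worker or cache deployment in the relevant namespace.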

2. Configuring Prometheus

For Apinizer pods to be scraped by Prometheus, you need to add annotations to the worker and cache deployments and configure Prometheus to discover those pods.

Add pod annotations:

Extend the Prometheus scrape config to cover the Apinizer namespaces:

3. Making Prometheus Reachable from Zabbix

The Zabbix Server needs to reach the Prometheus HTTP endpoint. The simplest method is to expose it via NodePort.

4. Preparing the Zabbix Side

Zabbix Agent installation

Install the Zabbix Agent on the Kubernetes node where Apinizer runs. This way, host-level metrics (CPU, RAM, disk) are collected alongside Apinizer metrics.

Import the Zabbix Template

The HTTP Agent-based template prepared for Apinizer (41 items, 8 triggers, 9 macros) is imported through the Zabbix interface. Go to Data collection → Templates → Import and select the apinizer_by_prometheus.yaml file. Under Advanced options, check Update existing, Create new, and Delete missing for all categories, then click Import.

Create a host and configure macros

Create a Zabbix host for the Apinizer environment:

- Host name: <node-hostname> (same as the Zabbix Agent)

- Templates: Linux by Zabbix agent and Apinizer by Prometheus

- Macros (override from the Inherited and host macros tab):

  - {$PROM.URL} → http://<cluster-node-ip>:30190

  - {$PROM.NAMESPACE} → tester (or the target namespace)

Analyzing Apinizer Metrics with PromQL

Zabbix HTTP Agent items send PromQL queries to the Prometheus /api/v1/query endpoint and extract the value from the JSON response using JSONPath. The queries below are the ones the template uses internally; they also serve as examples when defining a new item in Zabbix:
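To make the request and extraction steps concrete, here is a minimal Python sketch of what an HTTP Agent item does. It assumes the standard Prometheus instant-query response shape and a JSONPath such as $.data.result[0].value[1]; the exact path used by the template is not shown in this document. The function names and the sample address are illustrative.

```python
import json
from urllib.parse import urlencode


def build_query_url(prom_url: str, promql: str) -> str:
    """Build the instant-query URL an HTTP Agent item would request."""
    return f"{prom_url.rstrip('/')}/api/v1/query?{urlencode({'query': promql})}"


def extract_value(body: str) -> float:
    """Mimic the JSONPath $.data.result[0].value[1] on a response body."""
    payload = json.loads(body)
    if payload.get("status") != "success":
        raise ValueError(f"Prometheus returned status {payload.get('status')!r}")
    result = payload["data"]["result"]
    if not result:
        # An empty vector means the query matched no series.
        raise LookupError("query returned no series")
    # Each sample is [unix_timestamp, "value-as-string"].
    return float(result[0]["value"][1])


# Hand-written sample in the Prometheus instant-query response format.
SAMPLE = (
    '{"status":"success","data":{"resultType":"vector",'
    '"result":[{"metric":{},"value":[1700000000.0,"12.5"]}]}}'
)

if __name__ == "__main__":
    print(build_query_url("http://10.0.0.1:30190", "sum(up)"))
    print(extract_value(SAMPLE))
```

Because Prometheus returns sample values as strings, the final conversion to a number matters; in Zabbix the same role is played by the item's numeric type of information.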

Gateway API Traffic Analysis

Cache Performance Analysis

JVM Analysis

Creating Zabbix Dashboards

Zabbix dashboards can be created in a single shot through a Python script using the dashboard.create API call. With the prepared script, a 5-page, 72-widget dashboard is produced in one API call. The contents of each page are summarized below.
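The prepared script itself is not reproduced here. As a rough sketch of the shape of such a call, the code below assembles a JSON-RPC 2.0 request body for dashboard.create with one page and two widgets. The helper names, widget positions, and sizes are illustrative assumptions. Widget fields (which item or host a widget shows) are left empty because their names differ between Zabbix versions, and authentication is omitted.

```python
import json
from typing import Any, Optional


def make_widget(widget_type: str, name: str, x: int, y: int,
                width: int, height: int,
                fields: Optional[list] = None) -> dict:
    """Describe one dashboard widget; fields are version-specific."""
    return {
        "type": widget_type,
        "name": name,
        "x": x,
        "y": y,
        "width": width,
        "height": height,
        "fields": fields or [],
    }


def build_dashboard_request(name: str, pages: list,
                            request_id: int = 1) -> dict:
    """Wrap dashboard pages in a JSON-RPC 2.0 dashboard.create request."""
    return {
        "jsonrpc": "2.0",
        "method": "dashboard.create",
        "params": {"name": name, "pages": pages},
        "id": request_id,
    }


if __name__ == "__main__":
    # Page name, layout, and dashboard name are placeholders.
    overview = {
        "name": "Overview",
        "widgets": [
            make_widget("item", "Apinizer UP", 0, 0, 4, 2),
            make_widget("problems", "Active problems", 4, 0, 8, 4),
        ],
    }
    request = build_dashboard_request("Apinizer", [overview])
    print(json.dumps(request, indent=2))
```

Since all pages travel in a single params object, adding further pages only means appending more entries to the pages list before sending the request to the Zabbix API endpoint.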

Panel 1: Apinizer UP

- Item: Apinizer apimanager availability (TCP service check)

- Visualization: Item value (1 = up, 0 = down)

- Metric: (sum(rate(apinizer_api_traffic_success_count_total[5m])) / sum(rate(apinizer_api_traffic_total_count_total[5m]))) * 100

- Visualization: Gauge (green 95+, yellow 90-95, red below 90)

- Metric: blocked / total * 100

- Visualization: Gauge (yellow 10+, red 30+)

- Metric: cache_hits / total * 100

- Visualization: Gauge (blue tones)

- Metrics: total / success / error / blocked rates

- Visualization: Graph (classic), 4 lines

- All active triggers attached to the host

- Visualization: Problems widget

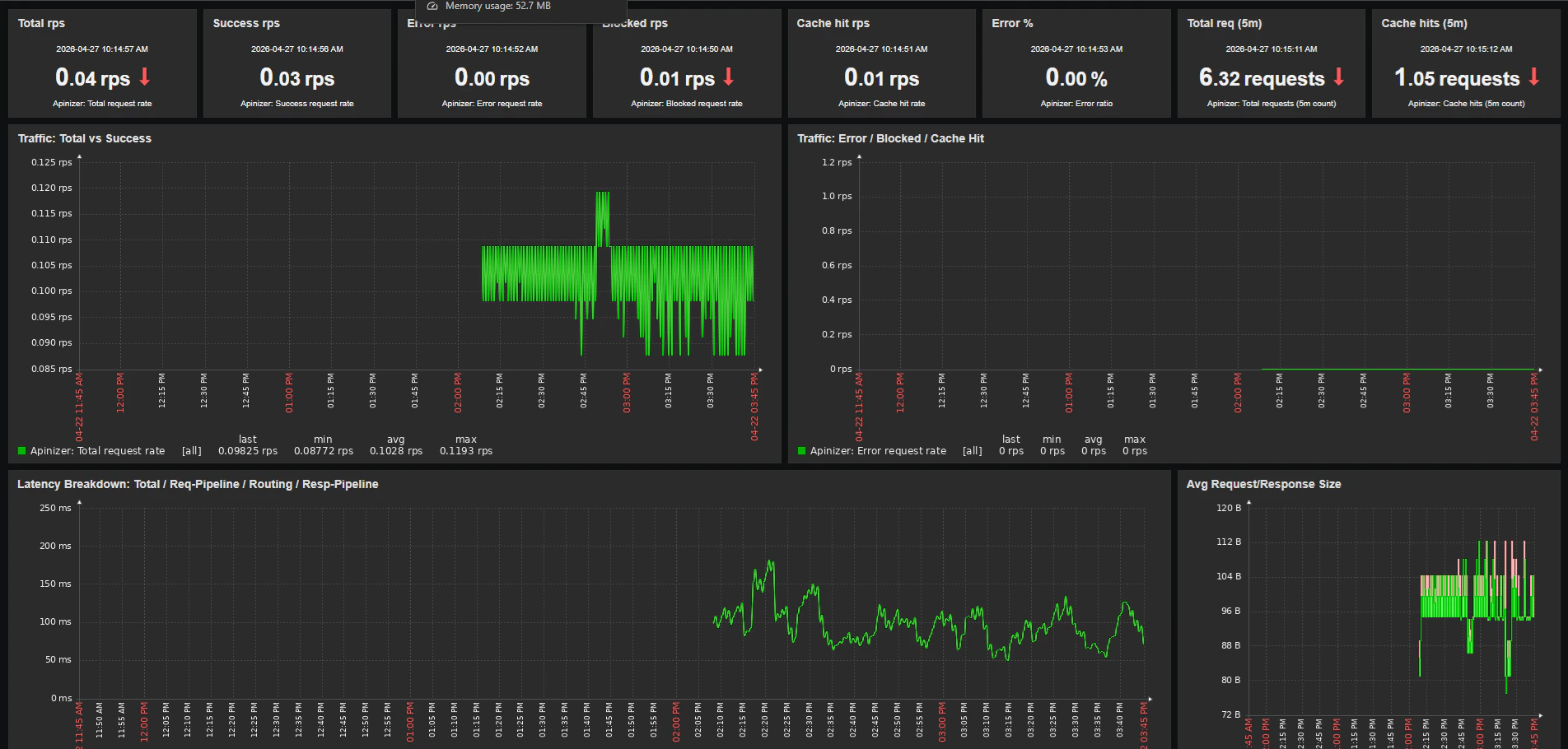
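The success-rate gauge combines a ratio with color bands. The following sketch reproduces that arithmetic in Python, using the thresholds listed for the gauge (green 95+, yellow 90-95, red below 90). The function names are illustrative, and treating exactly 95 as green and exactly 90 as yellow is an assumption about the boundaries.

```python
from typing import Optional


def success_rate_percent(success_rps: float, total_rps: float) -> Optional[float]:
    """Success ratio as a percentage, or None when there is no traffic."""
    if total_rps == 0:
        return None  # the PromQL division would yield no usable value here
    return 100.0 * success_rps / total_rps


def gauge_color(percent: float) -> str:
    """Map a success percentage to the gauge color bands."""
    if percent >= 95:
        return "green"
    if percent >= 90:
        return "yellow"
    return "red"


if __name__ == "__main__":
    rate = success_rate_percent(success_rps=45.0, total_rps=50.0)
    print(rate, gauge_color(rate))
```

The explicit zero-traffic branch is worth noting: when no requests arrive in the window, the ratio is undefined, which is a different condition from a low success rate.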

Traffic & Latency Page

Panel 1: Latency Breakdown

- Metrics:

  - Total response time: 1000 * sum(rate(apinizer_api_traffic_total_time_seconds_sum[5m])) / sum(rate(apinizer_api_traffic_total_time_seconds_count[5m]))

  - Request pipeline: same formula with request_pipeline_time_seconds

  - Routing: same formula with routing_time_seconds

  - Response pipeline: same formula with response_pipeline_time_seconds

- Visualization: Graph (classic), 4 lines

- Request size: sum(rate(apinizer_api_traffic_request_size_bytes_sum[5m])) / sum(rate(apinizer_api_traffic_request_size_bytes_count[5m]))

- Response size: sum(rate(apinizer_api_traffic_response_size_bytes_sum[5m])) / sum(rate(apinizer_api_traffic_response_size_bytes_count[5m]))

- Visualization: Graph (classic)

- Metric: sum(increase(apinizer_api_traffic_total_count_total[5m]))

- Visualization: Item value
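The latency and size formulas on this page all divide the rate of a _sum counter by the rate of the matching _count counter. Since both rates cover the same window, the window length cancels out and the result is the mean per request over that window. The sketch below works through the arithmetic with made-up counter readings; it ignores counter resets and the extrapolation that rate() performs.

```python
from typing import Optional


def mean_latency_ms(sum_start: float, sum_end: float,
                    count_start: float, count_end: float) -> Optional[float]:
    """Mean request latency in ms between two readings of the counters.

    sum_*   : readings of a ..._seconds_sum counter (seconds)
    count_* : readings of the matching ..._seconds_count counter
    """
    requests = count_end - count_start
    if requests <= 0:
        return None  # no requests in the window, so no mean to report
    seconds = sum_end - sum_start
    return 1000.0 * seconds / requests


if __name__ == "__main__":
    # Hypothetical readings taken five minutes apart:
    # 30 s of cumulative latency spread over 200 requests.
    print(mean_latency_ms(120.0, 150.0, 1000.0, 1200.0))  # 150.0
```

The factor of 1000 converts seconds to milliseconds, matching the unit of the 2000 ms latency trigger threshold.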

JVM & Infrastructure Page

Panel 1: JVM Heap %

- Metric: sum(jvm_memory_used_bytes{area="heap"}) / sum(jvm_memory_max_bytes{area="heap"}) * 100

- Visualization: Gauge (yellow 70+, red 85+)

- Metric 1 (tester): sum(jvm_memory_used_bytes{namespace="tester",application="apinizer",area="heap"}) / 1024 / 1024

- Metric 2 (zwebsocket): same query with a different namespace

- Visualization: Graph (classic), 2 lines in different colors

- Metric: sum(rate(jvm_gc_pause_seconds_sum[5m]))

- Visualization: Graph (classic)

- Metric: sum(httpcomponents_httpclient_pool_total_connections) / sum(httpcomponents_httpclient_pool_total_max) * 100

- Visualization: Gauge

- Metrics:

  - Backend request rate: sum(rate(apinizer_external_requests_total_count_total[5m]))

  - Backend error rate: sum(rate(apinizer_external_errors_total_count_total[5m]))

- Visualization: Graph (classic)

Cache Pod Page

Panel 1: Cache Pod Heap %

- Metric: sum(jvm_memory_used_bytes{application="apinizer-cache",area="heap"}) / sum(jvm_memory_max_bytes{application="apinizer-cache",area="heap"}) * 100

- Visualization: Gauge

- Metric: sum(jvm_threads_live_threads{application="apinizer-cache"})

- Visualization: Item value

- Metric: sum(rate(apinizer_cache_errors_total_count_total[5m]))

- Visualization: Graph (classic)

Host Health Page

This page shows OS-level metrics of the Kubernetes node where Apinizer runs. The data comes from the Linux by Zabbix agent template; it is collected directly from the Zabbix Agent, not through Prometheus.

Panel 1: CPU Utilization

- Item: system.cpu.util from the Linux template

- Visualization: Gauge and Graph (classic)

- Item: vm.memory.utilization

- Visualization: Gauge and Graph (classic)

- Item: system.cpu.load[all,avg1]

- Visualization: Graph (classic)

Zabbix Triggers (Alarms)

The Apinizer template ships with 8 triggers by default. Each threshold-based trigger takes its threshold from a separate macro, so it can be customized per host; the no-data trigger has no macro override.

| Trigger | Default Threshold | Macro |
|---|---|---|
| High error ratio (5m) | 5% | {$APINIZER.ERROR_RATIO.MAX} |
| High total response time (5m) | 2000 ms | {$APINIZER.LATENCY.MAX} |
| High backend error rate (5m) | 1 rps | {$APINIZER.EXT_ERROR.MAX} |
| High JVM heap usage (5m) | 85% | {$APINIZER.HEAP.MAX} |
| High GC pressure (5m) | 0.1 s/s | {$APINIZER.GC.MAX} |
| HTTP pool saturated (5m) | 90% | {$APINIZER.POOL.MAX} |
| No traffic metric data (nodata 5m) | n/a | (no override) |
| Blocked request spike (5m) | 10 rps | {$APINIZER.BLOCKED.MAX} |
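To illustrate how macro-driven thresholds behave, the sketch below models the seven threshold macros and their defaults from the table, and checks a set of observed values against them with optional per-host overrides. It is an illustration only, not the template's trigger expressions: it assumes a strict greater-than comparison, and the function name and sample values are made up.

```python
from typing import Optional

# Default thresholds from the trigger table, keyed by macro name.
DEFAULT_THRESHOLDS = {
    "{$APINIZER.ERROR_RATIO.MAX}": 5.0,   # percent
    "{$APINIZER.LATENCY.MAX}": 2000.0,    # milliseconds
    "{$APINIZER.EXT_ERROR.MAX}": 1.0,     # requests per second
    "{$APINIZER.HEAP.MAX}": 85.0,         # percent
    "{$APINIZER.GC.MAX}": 0.1,            # seconds of GC per second
    "{$APINIZER.POOL.MAX}": 90.0,         # percent
    "{$APINIZER.BLOCKED.MAX}": 10.0,      # requests per second
}


def breached(values: dict, overrides: Optional[dict] = None) -> list:
    """Return the macros whose observed value exceeds its threshold.

    values maps macro name -> observed value; overrides plays the role
    of host-level macro values replacing the template defaults.
    """
    thresholds = {**DEFAULT_THRESHOLDS, **(overrides or {})}
    return sorted(m for m, v in values.items() if v > thresholds[m])


if __name__ == "__main__":
    observed = {
        "{$APINIZER.HEAP.MAX}": 90.0,
        "{$APINIZER.ERROR_RATIO.MAX}": 3.0,
    }
    print(breached(observed))
    # A stricter host-level error ratio of 2% changes the outcome.
    print(breached(observed, {"{$APINIZER.ERROR_RATIO.MAX}": 2.0}))
```

With the defaults only the heap check fires; with the 2% override the error ratio check fires as well, which is the effect of overriding a macro at the host level.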

Best Practices

1. Metric Retention

On the Zabbix side, history (raw data) and trends (hourly aggregation) durations are configured separately per item. The Apinizer template uses these defaults:

- History: 30 days

- Trends: 365 days
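As a rough way to gauge what these retention settings mean in stored rows, the following sketch counts history values and hourly trend rows. The 60-second update interval is an assumption (the template's actual intervals are not stated here), and the calculation treats all 41 template items as numeric items that keep both history and trends.

```python
SECONDS_PER_DAY = 86_400
HOURS_PER_DAY = 24


def stored_values(items: int, update_interval_s: int,
                  history_days: int = 30, trend_days: int = 365) -> dict:
    """Estimate retained rows for a set of items.

    History keeps one value per update; trends keep one row per hour.
    """
    history = items * history_days * SECONDS_PER_DAY // update_interval_s
    trends = items * trend_days * HOURS_PER_DAY
    return {"history_values": history, "trend_rows": trends}


if __name__ == "__main__":
    # 41 items from the template, assumed 60 s update interval.
    print(stored_values(items=41, update_interval_s=60))
```

Under these assumptions the history table holds about 1.77 million values and the trends table about 359 thousand rows for one host, so shortening history has a much larger effect on storage than shortening trends.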

2. Multi-Environment Configuration

When Apinizer runs in multiple namespaces (tester, uat, prod), create a separate Zabbix host for each environment. Attach the same Apinizer by Prometheus template to each host and override the {$PROM.NAMESPACE} macro at the host level to point to the target namespace. This way:

- Each environment’s metrics stay isolated

- A separate dashboard can be produced per environment

- Trigger thresholds can be differentiated per environment (e.g., 2% error ratio in prod, 10% in test)
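The per-environment setup can be sketched as a small helper that produces the host-level macro list for each environment, in the macro/value form the Zabbix API uses for host macros. The helper name and the Prometheus address are placeholders; the 2% and 10% error ratios follow the example above.

```python
from typing import Optional


def host_macros(namespace: str, prom_url: str,
                error_ratio_max: Optional[float] = None) -> list:
    """Build host-level macro overrides for one environment."""
    macros = [
        {"macro": "{$PROM.URL}", "value": prom_url},
        {"macro": "{$PROM.NAMESPACE}", "value": namespace},
    ]
    if error_ratio_max is not None:
        # Only override the threshold when the environment needs its own.
        macros.append(
            {"macro": "{$APINIZER.ERROR_RATIO.MAX}", "value": str(error_ratio_max)}
        )
    return macros


if __name__ == "__main__":
    prom = "http://10.0.0.1:30190"  # placeholder address
    environments = {"tester": 10, "uat": None, "prod": 2}
    for namespace, ratio in environments.items():
        print(namespace, host_macros(namespace, prom, ratio))
```

Environments that pass None keep the template default of 5%, so only the hosts that need a different threshold carry an extra macro.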

3. Multi-Worker Comparison on the Same Dashboard

To compare two workers (for example, during a canary release) on the same dashboard, add items pointing to the second worker’s namespace to the template. On the JVM & Infrastructure page, both workers’ lines are then drawn in the same Heap, Threads, GC, and CPU graphs in different colors, which gives a quick visual comparison of performance.

4. Label Usage

Every metric series Apinizer emits carries the following labels:

- namespace: the Kubernetes namespace of the pod

- pod: the pod name (changes on restart)

- application: apinizer (worker) or apinizer-cache (cache pod)

- environment: Apinizer’s internal environment identifier

When a query should cover only the worker, include the application="apinizer" filter; otherwise, the sum() function will aggregate worker and cache metrics together and the result will be misleading.
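A small query builder makes it harder to forget these filters. The sketch below composes a PromQL selector from label pairs and uses it for the heap percentage query, always including the application label. The function names are illustrative, and label values are not escaped, so it suits only simple values like the ones shown.

```python
def selector(metric: str, **labels: str) -> str:
    """Render metric{label="value",...} with labels in sorted order."""
    inner = ",".join(f'{key}="{value}"' for key, value in sorted(labels.items()))
    return f"{metric}{{{inner}}}" if inner else metric


def heap_percent_query(namespace: str, application: str = "apinizer") -> str:
    """Heap usage percentage restricted to one namespace and application."""
    used = selector("jvm_memory_used_bytes", namespace=namespace,
                    application=application, area="heap")
    maximum = selector("jvm_memory_max_bytes", namespace=namespace,
                       application=application, area="heap")
    return f"sum({used}) / sum({maximum}) * 100"


if __name__ == "__main__":
    print(heap_percent_query("tester"))
    print(heap_percent_query("tester", application="apinizer-cache"))
```

Defaulting application to the worker value means a caller must opt in explicitly to query the cache pod, which avoids the mixed aggregation described above.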

Conclusion

Integrating Apinizer with Zabbix through Prometheus brings Apinizer-specific visibility into the operational monitoring platform that organizations already use. Metric collection is performed by Prometheus; Zabbix HTTP Agent items query those metrics and incorporate them into Zabbix’s own history/trends/dashboard/trigger ecosystem. This integration leverages the strengths of each component:

- Apinizer’s comprehensive metric collection capability

- Prometheus’s efficient time-series database and PromQL query language

- Zabbix’s mature trigger, escalation, and notification routing capabilities

- The ability of the Zabbix Agent to combine OS-level host metrics with Apinizer metrics on the same dashboard

Resources

For more information:

- Apinizer Documentation

- Prometheus Documentation

- Zabbix Documentation